Research shows how the brain’s motor signals sharpen our ability to decipher complex sound flows

By Shawn Hayward, The Neuro

Whether it is dancing or just tapping one foot to the beat, we all experience how auditory signals like music can induce movement. Now new research suggests that motor signals in the brain actually sharpen sound perception, and that this effect is stronger when we move in rhythm with the sound.

It is already known that the motor system, the part of the brain that controls our movements, communicates with the sensory regions of the brain. The motor system controls movements of the eyes and other body parts to orient our gaze and limbs as we explore our spatial environment. However, because the ears are immobile, it was less clear what role the motor system plays in distinguishing sounds.

Benjamin Morillon, a researcher at the Montreal Neurological Institute of McGill University, designed a study based on the hypothesis that signals coming from the sensorimotor cortex could prepare the auditory cortex to process sound, and by doing so improve its ability to decipher complex sound flows like speech and music.

Working in the lab of MNI researcher Sylvain Baillet, he recruited 21 participants who listened to complex tone sequences and had to indicate whether a target melody was, on average, higher- or lower-pitched than a reference. The researchers also played an intertwined distracting melody to measure the participants’ ability to focus on the target melody.

The exercise was done in two stages, one in which the participants were completely still, and another in which they tapped on a touchpad in rhythm with the target melody. The participants performed this task while their brain oscillations, a form of neural signaling brain regions use to communicate with each other, were recorded with magnetoencephalography (MEG).

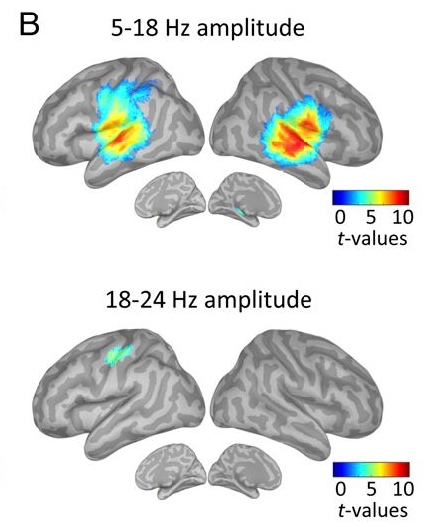

MEG millisecond imaging revealed that bursts of fast neural oscillations coming from the left sensorimotor cortex were directed at the auditory regions of the brain. These oscillations occurred in anticipation of the next tone of interest. This finding shows that the motor system can predict when a sound will occur and send this information to auditory regions so they can prepare to interpret the sound.

One striking aspect of this discovery is that timed brain motor signaling anticipated the incoming tones of the target melody, even when participants remained completely still. Hand tapping to the beat of interest further improved performance, confirming the important role of motor activity in the accuracy of auditory perception.

“A realistic example of this is the cocktail party concept: when you try to listen to someone but many people are speaking around at the same time,” says Morillon. “In real life, you have many ways to help you focus on the individual of interest: pay attention to the timbre and pitch of the voice, focus spatially toward the person, look at the mouth, use linguistic cues, use what was the beginning of the sentence to predict the end of it, but also pay attention to the rhythm of the speech. This latter case is what we isolated in this study to highlight how it happens in the brain.”

A better understanding of the link between movement and auditory processing could one day mean better therapies for people with hearing or speech comprehension problems.

“It has implications for clinical research and rehabilitation strategies, notably on dyslexic children and hearing-impaired patients,” says Morillon. “Teaching them to better rely on their motor system by at first overtly moving in synchrony with a speaker’s pace could help them to better understand speech.”

This study was published in the journal Proceedings of the National Academy of Sciences of the USA on Oct. 2, 2017. It was made possible with funding from a CIBC MNI post-doctoral fellowship for neuroimaging research to Morillon, and support to Baillet from the Killam Foundation, a Senior-Researcher Award from the Fonds de recherche du Québec – Santé (FRQS), a Discovery Grant from the Natural Sciences and Engineering Research Council of Canada, the National Institutes of Health (2R01EB009048-05), and a Platform Support Grant from the Brain Canada Foundation.